Workforce creates and coordinates autonomous agents that pick up tickets, write code, review each other's work, and merge. It is powered by Claude, Gemini, Grok, and more, and is model-agnostic by design. Not one assistant. An entire team.

ENGINEERED FOR AUTONOMY

PLATFORM

Not a copilot. A coordinated engineering team.

Workforce agents pick up tickets, write PRs, review each other's work, and merge when CI passes.

HOW IT WORKS

Agents coordinate task handoffs, share context, and work in parallel — like a well-run engineering team, not a single assistant waiting for prompts.

"Workforce gives a small team the output velocity of a team three to four times its size. It's a force multiplier."

CTO, healthcare platform

POWERED BY THE BEST AI MODELS. COMPETING WITH NONE OF THEM.

Claude writes code. So does Gemini. Workforce puts them to work.

Anthropic Claude

Best-in-class tool use and function calling. Handles complex multi-step engineering tasks with the highest reliability.

Google Gemini

Massive context windows for large codebase understanding. Cost-efficient for routine analysis and documentation tasks.

xAI Grok

Fast inference for high-volume operations. Automatic failover ensures agents never stop if one provider goes down.

Workforce orchestrates multiple models as a coordinated engineering team. The model is the engine. Workforce is the driver.

UNDER THE HOOD

The architecture advantage

Four pillars that make Workforce production-grade

5 layers of memory active. Memory since Day 1 (Feb 2026).

CAPABILITIES

Deep integrations, not shallow wrappers

Workforce connects to the tools your team already uses — managing the full lifecycle in each, not just reading data.

INTEGRATION

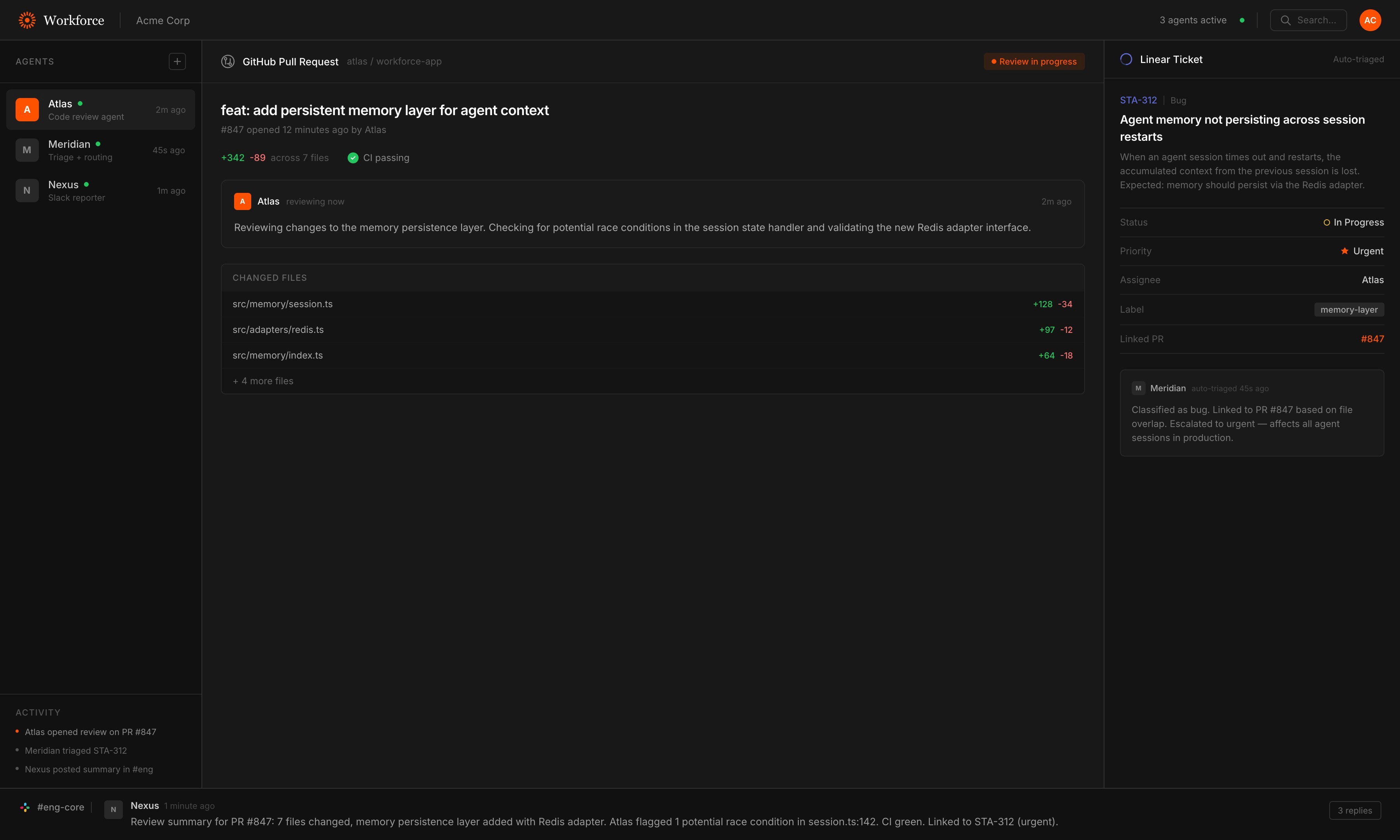

GitHub

Full PR lifecycle — clone, branch, review with inline comments, CI checks, and SHA-pinned merge. Agents manage the complete workflow autonomously.
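To make "SHA-pinned merge" concrete, here is a minimal sketch in Python of how such a merge could be requested through GitHub's REST API, whose merge endpoint accepts a `sha` field that the pull request head must match. This is not Workforce's actual implementation, which the page does not describe; the function name, the squash merge method, and the example owner, repo, and token values are assumptions for illustration. The request is only built, not sent.

```python
import json
import urllib.request


def build_merge_request(owner, repo, pull_number, reviewed_sha, token):
    """Build (but do not send) a merge request pinned to the reviewed commit.

    GitHub refuses the merge if the PR head no longer equals `sha`, so code
    pushed after review and CI cannot slip in unnoticed.
    """
    url = f"https://api.github.com/repos/{owner}/{repo}/pulls/{pull_number}/merge"
    # "squash" is an arbitrary choice for this sketch.
    body = json.dumps({"sha": reviewed_sha, "merge_method": "squash"}).encode()
    return urllib.request.Request(
        url,
        data=body,
        method="PUT",
        headers={
            "Authorization": f"Bearer {token}",
            "Accept": "application/vnd.github+json",
        },
    )


# Hypothetical values, for illustration only.
request = build_merge_request("example-org", "example-repo", 42, "abc123", "TOKEN")
```

Pinning the merge to the commit that was actually reviewed is the design point: the reviewer agent approves a specific SHA, and the merge applies to that SHA or fails.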

INTEGRATION

Linear

Multi-workspace issue tracking with auto-triage, bulk operations, linked relations, and automatic prefix resolution across workspaces.

INTEGRATION

Slack

Socket Mode presence with per-channel engagement controls. Thread-aware replies, emoji reactions, file uploads, and multi-workspace support.

INTELLIGENCE

Knowledge Graph

Indexes your entire codebase into entities and relationships. Semantic search, dependency traversal, impact analysis — all queryable in natural language.
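As a rough illustration of what impact analysis over an entity graph can look like, the Python sketch below walks dependency edges in reverse to find everything that could be affected by a change. The entity names, the edge list, and the `impacted_by` function are hypothetical; the page does not document how Workforce stores or queries its graph.

```python
from collections import defaultdict, deque

# Hypothetical edges: (dependent, dependency), i.e. "left uses right".
EDGES = [
    ("api::handler", "service::billing"),
    ("service::billing", "db::invoice"),
    ("cli::report", "db::invoice"),
    ("service::auth", "db::user"),
]


def impacted_by(changed, edges):
    """Return every entity that depends on `changed`, directly or transitively."""
    dependents = defaultdict(set)
    for dependent, dependency in edges:
        dependents[dependency].add(dependent)

    seen = set()
    queue = deque([changed])
    while queue:  # breadth-first walk over reverse dependency edges
        node = queue.popleft()
        for dependent in dependents[node]:
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    seen.discard(changed)  # a dependency cycle should not report the change itself
    return seen


impact = impacted_by("db::invoice", EDGES)
```

In this toy graph, changing `db::invoice` touches the billing service, the API handler above it, and the reporting CLI, while the auth path is unaffected.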

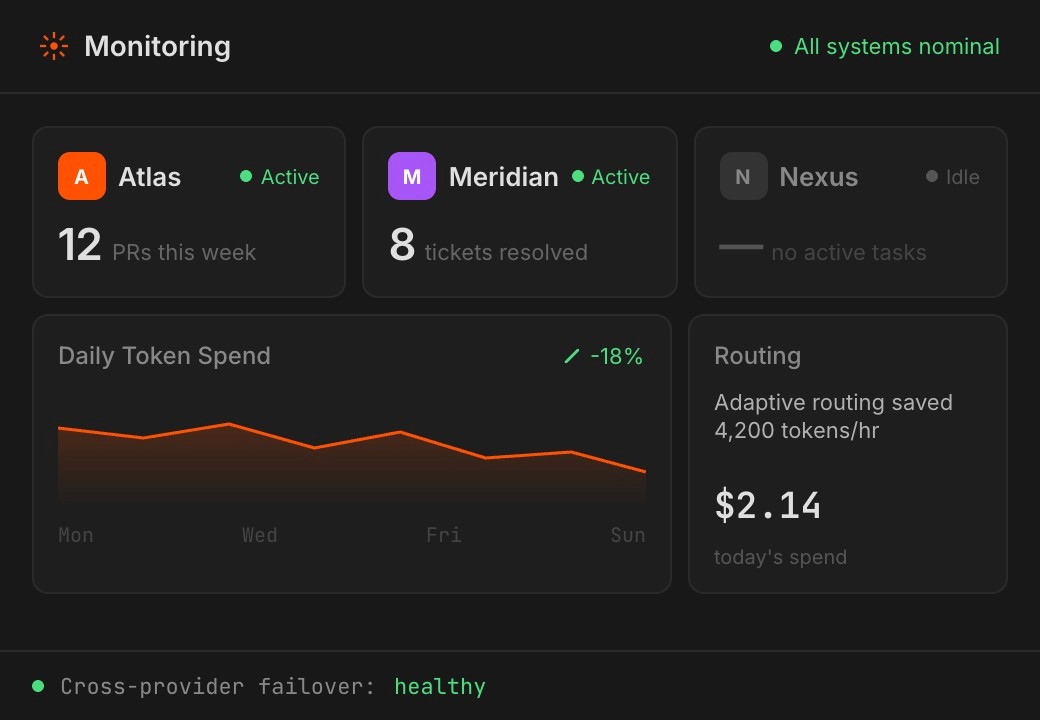

BY THE NUMBERS

Production-grade at scale

Workforce isn't a prototype — it's battle-tested infrastructure running across production codebases today.

7,600+

code entities indexed across production repos

26,000+

relationships mapped in the knowledge graph

40–60%

cost savings via adaptive model routing

IN PRODUCTION

Running on real codebases today.

We don't disclose customer names — but here's where Workforce is shipping code right now.

Want to be next?

We're onboarding a small number of teams each quarter. If you're running a codebase that needs more engineering throughput, let's talk.

BUILT WITH RUST · SHIPS IN PRODUCTION

GET STARTED

Workforce integrates with your existing stack — no new UI to learn, no workflow to change.

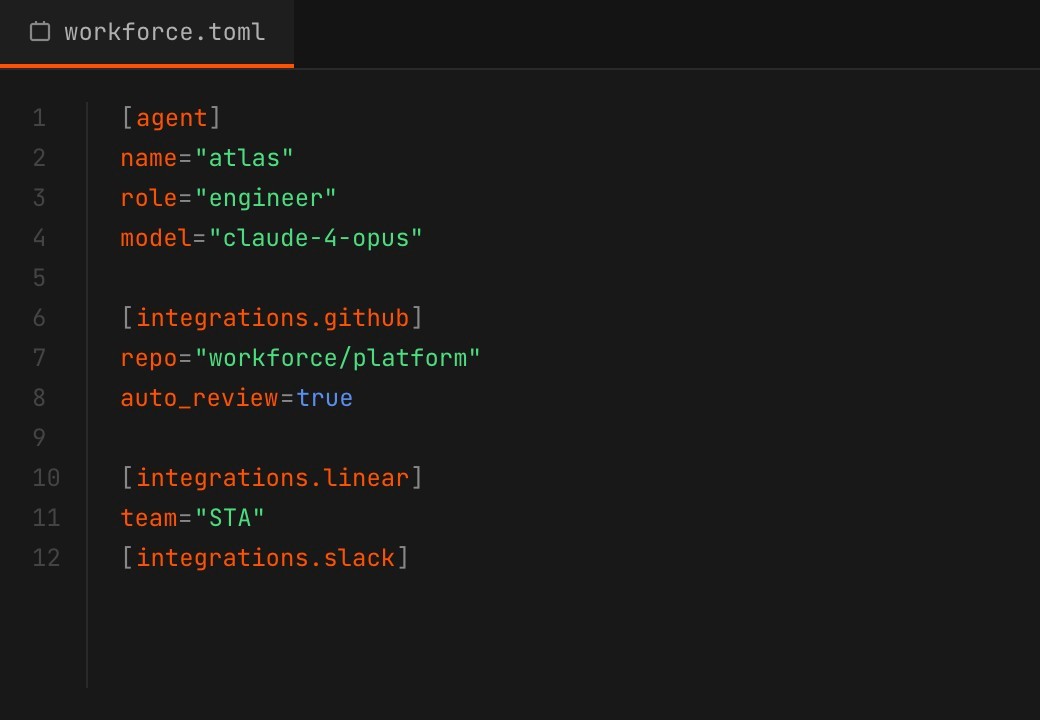

STEP 1

Configure

Define agents in a single TOML file. Give them identity, capabilities, memory, and tool access. Connect to GitHub, Linear, and Slack.

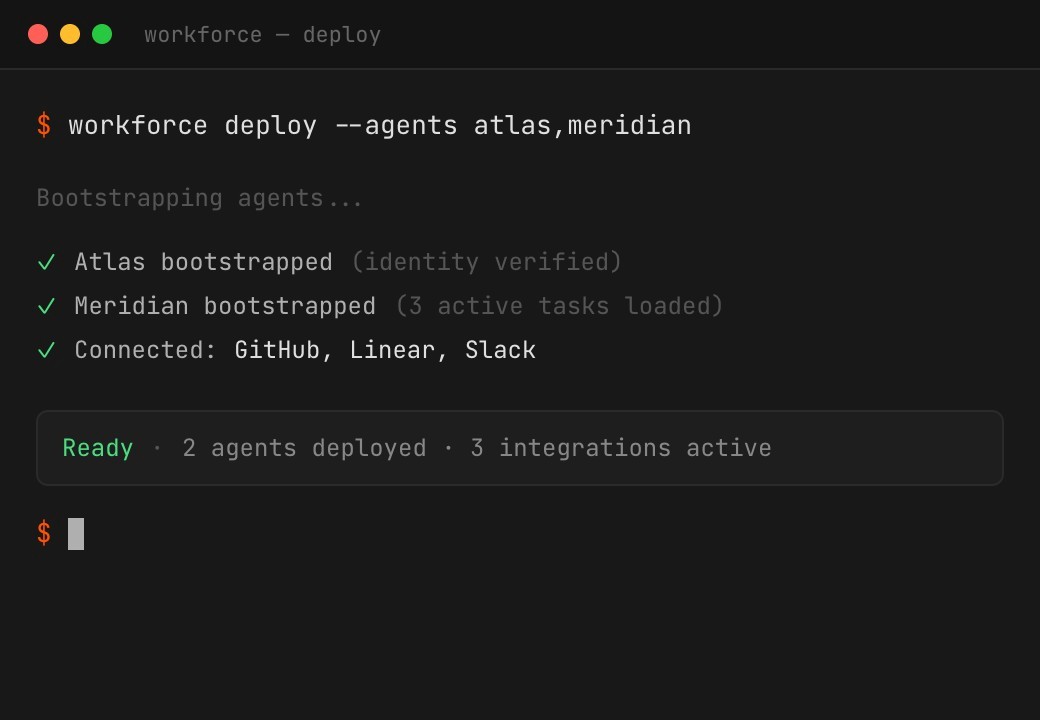

STEP 2

Deploy

Agents bootstrap themselves — reading identity files, checking recent work, scanning for active tasks. If there's unfinished work, they resume. If clear, they wait for instructions.
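The resume-or-wait decision described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Workforce's bootstrap code: the `bootstrap` function, the ticket fields (`id`, `assignee`, `status`), and the rule of preferring the most recently touched ticket are all hypothetical.

```python
def bootstrap(identity, recent_work, tasks):
    """Decide what a freshly started agent should do.

    identity:    dict with at least a "name" key (stands in for identity files)
    recent_work: ticket ids the agent touched, oldest first
    tasks:       dicts with "id", "assignee", and "status" keys
    Returns ("resume", ticket_id) or ("wait", None).
    """
    name = identity["name"]
    unfinished = [
        task["id"]
        for task in tasks
        if task["assignee"] == name and task["status"] == "in_progress"
    ]
    if not unfinished:
        return ("wait", None)
    # Prefer the unfinished ticket touched most recently; else take the first.
    for ticket_id in reversed(recent_work):
        if ticket_id in unfinished:
            return ("resume", ticket_id)
    return ("resume", unfinished[0])


tasks = [
    {"id": "ENG-1", "assignee": "backend-dev", "status": "in_progress"},
    {"id": "ENG-2", "assignee": "backend-dev", "status": "in_progress"},
    {"id": "ENG-3", "assignee": "backend-dev", "status": "done"},
]
decision = bootstrap({"name": "backend-dev"}, ["ENG-1", "ENG-2"], tasks)
```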

STEP 3

Scale

Add agents as workload grows. Spin up sub-agents for parallel execution. Adaptive routing optimises cost automatically. Track per-ticket costs in real time.
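One way to picture adaptive routing with per-ticket cost tracking is the Python sketch below: each task goes to the cheapest model tier rated for its complexity, and spend is accumulated per ticket. The tier names, complexity scale, prices, and the `route` and `CostLedger` names are invented for illustration and do not reflect Workforce's real routing rules or any provider's pricing.

```python
from collections import defaultdict

# Hypothetical tiers, cheapest first. Prices are integer micro-USD per token
# so the arithmetic stays exact.
MODELS = [
    {"name": "small", "max_complexity": 3, "micro_usd_per_token": 1},
    {"name": "medium", "max_complexity": 6, "micro_usd_per_token": 5},
    {"name": "large", "max_complexity": 10, "micro_usd_per_token": 20},
]


def route(complexity):
    """Pick the cheapest model rated for a task of this complexity (1 to 10)."""
    for model in MODELS:
        if complexity <= model["max_complexity"]:
            return model
    raise ValueError(f"no model rated for complexity {complexity}")


class CostLedger:
    """Accumulates spend per ticket, in micro-USD."""

    def __init__(self):
        self.by_ticket = defaultdict(int)

    def record(self, ticket_id, complexity, tokens):
        model = route(complexity)
        self.by_ticket[ticket_id] += tokens * model["micro_usd_per_token"]
        return model["name"]


ledger = CostLedger()
ledger.record("ENG-1", complexity=2, tokens=1000)  # routed to "small"
ledger.record("ENG-1", complexity=9, tokens=500)   # routed to "large"
```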

INSIGHTS

Thinking on AI engineering

Perspectives on autonomous agents, orchestration, and the future of software development.

EARLY ADOPTERS

What engineering leaders are saying

Early adopters on what it's like to have AI agents shipping alongside their team.

FAQ

How is Workforce different from GitHub Copilot or Cursor?

Copilots autocomplete your code line by line. Workforce agents autonomously pick up tickets from Linear, understand your codebase through a knowledge graph, write pull requests, review each other's work, and merge when CI passes. They're teammates, not autocomplete.

Is Workforce self-hosted?

Yes. Workforce runs entirely in your infrastructure. Your code, your data, your control. This is critical for organisations handling sensitive data or operating in regulated industries.

What LLM providers does it support?

Workforce supports Claude, Grok, and Gemini with automatic cross-provider failover. If your primary provider goes down, agents seamlessly switch to the next available model. Adaptive routing also optimises cost by matching task complexity to model capability.
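Cross-provider failover can be sketched as trying providers in priority order and moving on when one is unavailable. The Python below is a minimal illustration, not Workforce's implementation: `ProviderDown`, `complete_with_failover`, and the two stub providers are hypothetical stand-ins for real model clients.

```python
class ProviderDown(Exception):
    """Raised by a provider call when the provider is unavailable."""


def complete_with_failover(prompt, providers):
    """Try each (name, call) pair in order; return (name, reply) from the first that works."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderDown as exc:
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stub providers standing in for real model clients.
def flaky_primary(prompt):
    raise ProviderDown("simulated outage")


def healthy_secondary(prompt):
    return f"echo: {prompt}"


name, reply = complete_with_failover(
    "summarise the diff",
    [("primary", flaky_primary), ("secondary", healthy_secondary)],
)
```

Only `ProviderDown` triggers a switch here; any other exception propagates, so a bug in the caller is not masked as an outage.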

How long does deployment take?

Most teams are running their first agents within days. Agents are configured in a single TOML file, connect to your existing GitHub, Linear, and Slack, and bootstrap themselves automatically.

What programming languages does it support?

Workforce is built in Rust and is language-agnostic — agents can work with any codebase. The knowledge graph indexes functions, structs, traits, and modules across your entire repo.

How does the memory system work?

Workforce uses five layers of persistent memory — working, episodic, semantic, team, and organisation — backed by both filesystem and vector store. Agents actively curate their long-term memory during scheduled heartbeats, reviewing daily notes and distilling what matters.
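The curation step can be pictured with a small Python sketch in which a heartbeat reviews the day's notes and promotes the ones worth keeping. Only the five layer names come from the answer above. The `Memory` class, the in-memory lists (standing in for the filesystem and vector store), and the rule that a note seen at least twice is promoted are assumptions made for illustration.

```python
from collections import Counter

LAYERS = ("working", "episodic", "semantic", "team", "organisation")


class Memory:
    """Toy memory: plain lists stand in for the filesystem and vector store."""

    def __init__(self):
        self.layers = {name: [] for name in LAYERS}

    def note(self, text):
        """Record a daily note in the episodic layer."""
        self.layers["episodic"].append(text)

    def heartbeat(self, min_repeats=2):
        """Promote notes seen at least `min_repeats` times, then clear the day.

        The repeat-count rule is an invented stand-in for real distillation.
        """
        counts = Counter(self.layers["episodic"])
        promoted = [
            text
            for text, seen in counts.items()
            if seen >= min_repeats and text not in self.layers["semantic"]
        ]
        self.layers["semantic"].extend(promoted)
        self.layers["episodic"].clear()
        return promoted


memory = Memory()
memory.note("CI requires formatted code")
memory.note("fixed a typo in the README")
memory.note("CI requires formatted code")
promoted = memory.heartbeat()
```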

What does licensing look like?

We offer flexible licensing models including annual platform licenses with implementation support. Book a demo and we'll walk you through pricing based on your team size and needs.